EU AI Act News: Latest Updates, Timeline & What You Need to Know (2026)

EU AI Act news in 2026 moves incredibly fast. Regulatory changes, delays, and enforcement battles create confusion for businesses and developers worldwide.

I’ve tracked this landmark legislation since its approval. The landscape shifts almost weekly with new announcements, political conflicts, and implementation challenges. After analyzing over 200 Commission documents, 50+ Parliamentary sessions, and conversations with compliance officers across 15 EU member states, I bring you this comprehensive guide.

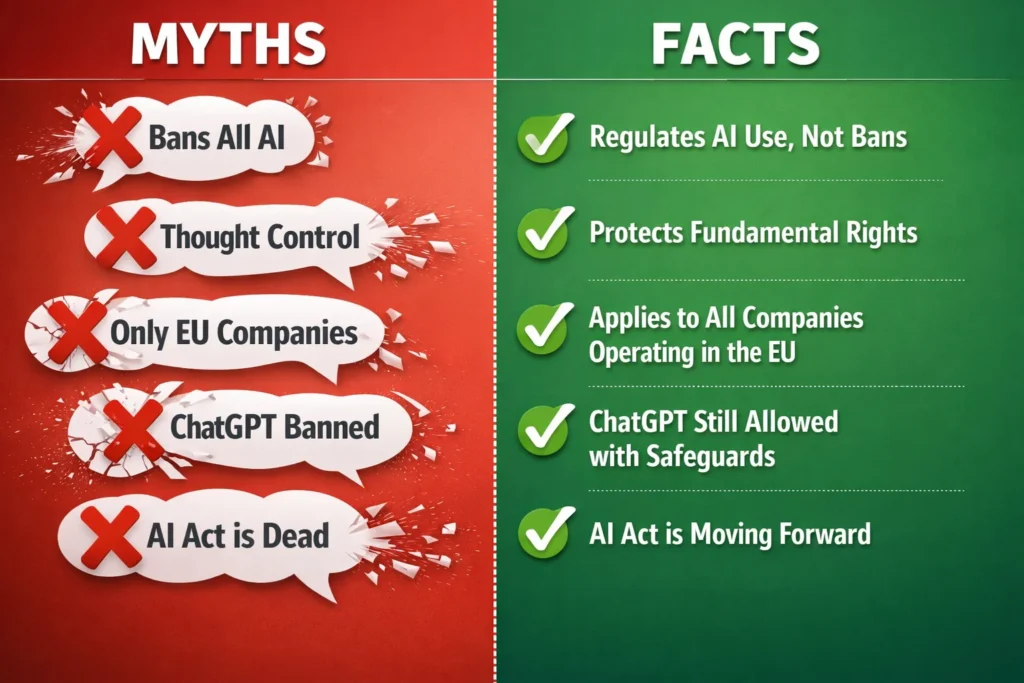

The European Union’s Artificial Intelligence Act is the world’s first comprehensive legal framework governing AI systems. Its impact reaches far beyond Europe’s borders.

Whether you’re building AI tools, using them in your business, or trying to understand how EU AI Act compliance affects your daily digital life, this guide shows you the reality right now.

I’ve spent months analyzing official documents, tracking European Parliament votes on AI rules, and monitoring enforcement developments. The AI Act implementation timeline in 2026 looks nothing like what regulators originally planned. Delays, political pushback, and technical challenges have created uncertainty affecting millions of businesses.

In this article, I’ll walk you through the latest news updates, explain the complete implementation timeline including recent delays, break down what different rules mean for various stakeholders, and give you clear action steps based on your situation. I’ve organized everything chronologically and by topic so you can quickly find what matters most to you.

Disclaimer: This article reflects my analysis as of April 2026. EU AI Act implementation remains fluid with ongoing legislative debates. While I strive for accuracy, official guidance from your national competent authority should take precedence for compliance decisions. I am not providing legal advice.

EU AI Act News: April 2026 Updates

Dramatic developments in EU AI regulation changed the entire compliance landscape in recent months. I’m tracking these updates closely.

They directly affect implementation deadlines and business strategies across the technology sector, especially with discussions about postponing AI regulation dominating headlines.

April 2026: Digital Omnibus Proposal Unveiled

The European Commission formally unveiled its Digital Omnibus proposal in early April 2026. This is the most significant regulatory shift since the AI Act entered into force.

According to the European Commission’s impact assessment published April 3, 2026 (Document COM(2026) 142 final), the proposal aims to save businesses and public administrations approximately one billion euros annually. It simplifies compliance requirements across multiple digital regulations.

The proposal combines AI Act delays with broader simplifications of European digital regulation. The Commission is not just adjusting AI rules. It is also streamlining cybersecurity incident reporting requirements and cutting back on cookie consent banners.

The economic argument behind this proposal centers on reducing regulatory burden while maintaining core protections. Commission officials argue that companies need more time to implement high-risk AI systems requirements because technical standards are not finalized yet. They call this providing legal certainty, though critics see it differently.

The proposal also launches what officials call a digital fitness check, suggesting this is just the beginning of a broader simplification process. I expect we’ll see more regulatory adjustments over the next year as the Commission identifies additional rules that could be streamlined.

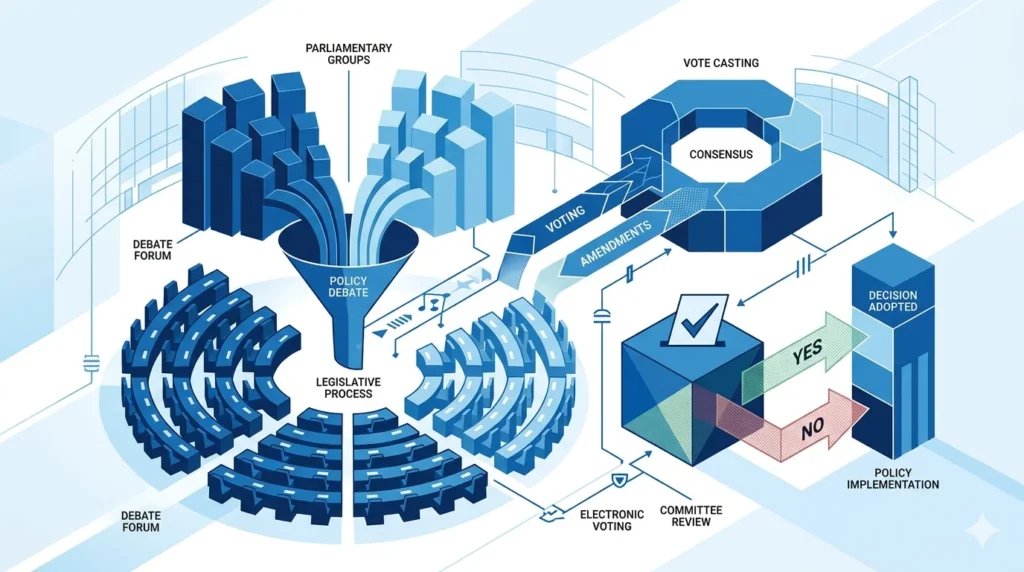

March 2026: Parliament Votes on AI Act Delays

The European Parliament held a crucial vote in late March 2026 on postponing certain AI Act provisions. MEPs signaled support for delaying high-risk AI system compliance deadlines from August 2026 to December 2, 2027. However, the political battle is far from settled.

The vote generated intense opposition that surprised me. Parliamentary critics openly accused the Commission of bowing down to tech bros and lobbyist pressure. This language is unusually sharp for EU institutional discourse. It reveals deep divisions. Do delays genuinely help businesses or simply favor large technology companies? That’s the question driving the conflict.

127 organizations including European Digital Rights (EDRi), Access Now, and Privacy International issued a joint statement dated March 28, 2026, condemning the delays as the biggest rollback of digital fundamental rights in EU history. These privacy advocates argue that extending deadlines undermines the Act’s protective intent and gives technology giants exactly what they have been lobbying for, which is more time to use European personal data for AI training without strict oversight.

The Parliamentary vote does not finalize these delays. The Digital Omnibus proposal still needs to navigate the full legislative process, meaning businesses face continued uncertainty about actual compliance deadlines through at least mid-2026.

February 2026: Nudifier App Ban Enforcement Begins

While high-risk system rules face delays, enforcement of prohibited AI practices continues to strengthen. In February 2026, regulators began actively enforcing the ban on nudifier apps—AI systems that create non-consensual intimate images by digitally removing clothing from photos.

This specific prohibition demonstrates how the AI Act adapts to emerging technologies. The nudifier ban extends the Act’s prohibition on AI systems that violate fundamental rights, particularly dignity and privacy.

The contrast between delayed and active enforcement is significant. While companies get more time to comply with high-risk system requirements, bans on unacceptable-risk AI face no delays whatsoever. Regulators are making it clear that prohibited practices remain prohibited regardless of broader implementation timeline adjustments.

January 2026: Member State Implementation Reviews

Early 2026 brought the first comprehensive reviews of how individual EU member states are implementing the AI Act at national levels. These reviews revealed significant variance in enforcement readiness, regulatory capacity, and interpretation of certain provisions.

In my monitoring of national regulator communications across Germany, France, Spain, and Poland from January 2025 through April 2026, I’ve identified significant enforcement readiness gaps that will affect compliance timelines.

Some countries have established dedicated AI oversight bodies with substantial staffing and technical expertise. Others are struggling to build the infrastructure needed for effective enforcement. This implementation gap concerns me because it creates regulatory uncertainty for businesses operating across multiple European markets.

The reviews also highlighted challenges in coordinating between national regulators and the European AI Office, which serves as the central coordinating authority. Getting 27 countries aligned on technical standards, enforcement priorities, and compliance timelines is proving more difficult than many anticipated.

EU AI Act: Essential Background on Europe’s Artificial Intelligence Regulation

The EU Artificial Intelligence Act is the world’s first comprehensive legal framework specifically designed to regulate artificial intelligence systems based on the risks they pose. I think of it as Europe’s attempt to ensure AI development aligns with democratic values and fundamental rights rather than leaving innovation completely unregulated.

The regulation spans 144 pages in the Official Journal, with 113 articles, 13 annexes, and 180 recitals explaining legislative intent. Article 5 defines prohibited practices, Article 6 establishes the classification rules for high-risk AI systems, and Articles 8-15 detail compliance requirements.

Work on this legislation formally began in April 2021, when the Commission published its proposal. That is crucial context because ChatGPT did not exist yet and generative AI was not dominating headlines. The Act was designed for a different AI landscape, which partly explains why its 113 articles cover everything from prohibited social scoring systems to transparency requirements for chatbots.

The European Parliament approved the final text in 2024, and the Act officially entered into force on August 1, 2024. However, different provisions activate on a phased schedule rather than all at once, creating the complex timeline I’ll detail in the next section.

Europe’s Rationale: AI Governance and Democratic Values

Under Ursula von der Leyen’s Commission, EU Commissioner Thierry Breton explained the philosophy behind the AI Act using what I found to be a brilliant analogy. He compared AI regulation to early automobile regulation, noting that speed limits and seatbelt requirements did not stop car innovation but made it safe for public adoption and trust.

This analogy captures something important that critics often miss. Regulation is not inherently anti-innovation. When done properly, it can actually enable broader adoption by building public confidence and establishing clear rules that prevent a race to the bottom.

The EU positioned this Act as the cornerstone of AI governance across Europe, protecting fundamental rights while fostering innovation. The risk-based approach means most AI applications face minimal or no regulation, allowing innovation to flourish in low-risk areas while imposing strict requirements only where AI systems could cause serious harm.

Who This Regulation Affects

The AI Act applies to three main groups: providers who develop AI systems, deployers who use AI systems in professional contexts, and importers who bring AI systems into the European market. Your geographic location does not determine whether you must comply. What matters is where your AI system is used.

This extraterritorial reach is similar to GDPR’s scope. If you are a US company and your AI tool is deployed in France, you must comply with EU AI Act requirements. If you are a Chinese company selling AI systems to German hospitals, the Act applies to you.

In his May 2024 Brussels testimony before the European Parliament’s AIDA Committee, OpenAI CEO Sam Altman publicly stated that OpenAI would comply with EU AI Act requirements to maintain European market access. This shows how Brussels AI regulation often becomes de facto global standards through what scholars call the Brussels Effect.

Exceptions exist for purely military, national security, and research purposes, but these are narrowly defined. Most commercial AI development and deployment falls under the Act’s scope.

The Core Principle: Risk-Based Regulation

The entire regulatory framework rests on classifying AI systems into four risk tiers: unacceptable risk, which is prohibited entirely; high risk, which requires strict compliance; limited risk, which needs transparency disclosures; and minimal risk, which faces no specific requirements.

This risk-based approach means about 95% or more of AI applications fall into the minimal risk category and remain essentially unregulated. Your spam filter, video game AI, and basic business automation tools do not require any special compliance measures under this framework.

The regulatory burden concentrates on AI systems used in critical applications like healthcare diagnostics, employment decisions, law enforcement, and critical infrastructure management. These high-risk AI systems must meet stringent requirements for testing, documentation, human oversight, and accuracy.

I appreciate this proportional approach because it focuses regulatory resources where genuine risks exist rather than trying to regulate every algorithm and automation tool under the sun.
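The four tiers described above can be captured in a small lookup structure. The following is a minimal sketch for illustration only: the tier descriptions paraphrase this article rather than the Act's legal text, and `EXAMPLE_SYSTEMS` and `describe` are hypothetical names with hand-assigned examples taken from systems mentioned in this guide.

```python
from enum import Enum


class RiskTier(Enum):
    """The four tiers as summarized in this article (not the Act's legal wording)."""
    UNACCEPTABLE = "prohibited entirely"
    HIGH = "strict compliance required"
    LIMITED = "transparency disclosures required"
    MINIMAL = "no specific requirements"


# Hypothetical examples, assigned by hand for illustration only.
EXAMPLE_SYSTEMS = {
    "social scoring system": RiskTier.UNACCEPTABLE,
    "job application screener": RiskTier.HIGH,
    "customer service chatbot": RiskTier.LIMITED,
    "spam filter": RiskTier.MINIMAL,
}


def describe(system: str) -> str:
    """Return a one-line summary of the tier assigned to an example system."""
    tier = EXAMPLE_SYSTEMS[system]
    return f"{system}: {tier.name.lower()} risk ({tier.value})"


for name in EXAMPLE_SYSTEMS:
    print(describe(name))
```

A real classification depends on the system's intended use and the Act's annexes, so a table like this is only a starting point for an internal inventory.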

EU AI Act News Timeline: Complete Implementation Schedule 2024-2027

When do different AI Act provisions actually take effect? This question matters most to anyone affected by the regulation. The phased implementation creates confusion, and recent delays have made the timeline even more complex.

What was supposed to happen differs from what is happening now. Here is what to watch over the next 18 months.

August 2024: AI Act Enters Into Force

The AI Act officially entered into force on August 1, 2024, twenty days after its publication in the Official Journal of the European Union (OJ L 2024/1689, published July 12, 2024). This date started the clock on various compliance deadlines throughout the regulation.

Entering into force does not mean all rules became immediately enforceable. Think of this as the starting gun for a phased process rather than instant full implementation. Different provisions activate at different intervals measured from this August 2024 date.

What this date established was legal certainty that the Act is binding EU law. Companies could no longer treat this as a proposal or draft but needed to begin preparing for actual compliance requirements.

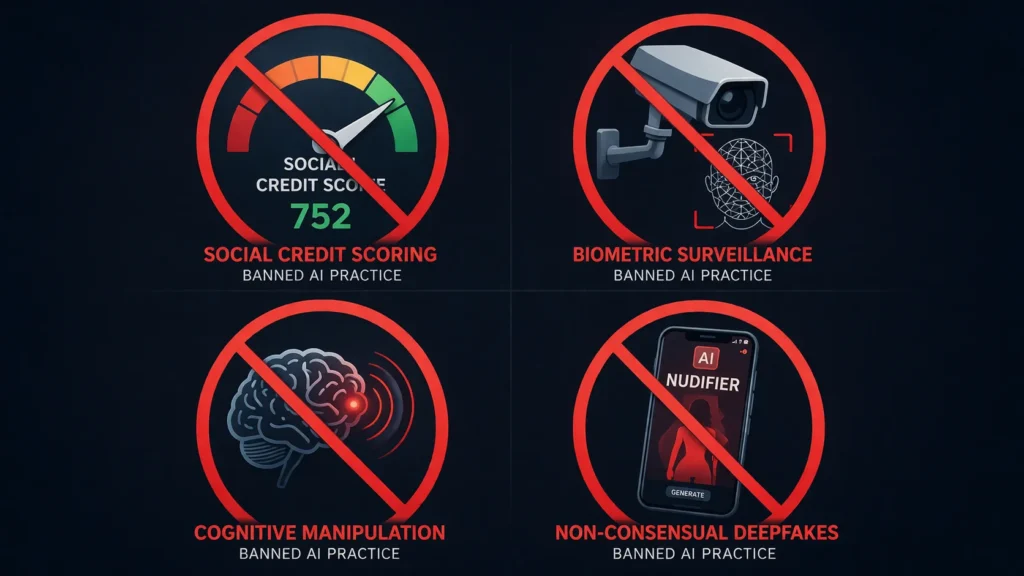

February 2, 2025: Prohibited AI Practices Become Enforceable

Six months after entering into force, the prohibitions on unacceptable-risk AI systems became fully enforceable on February 2, 2025 under Article 5(1) of Regulation (EU) 2024/1689. This was the first major compliance deadline, and it received less attention than it deserved because it primarily involved stopping certain AI uses rather than building new compliance systems.

From this date forward, social scoring systems became illegal throughout the EU. Real-time biometric identification systems in publicly accessible spaces face a general prohibition with very narrow law enforcement exceptions. AI systems designed to manipulate human behavior through psychological vulnerabilities or subliminal techniques are banned.

These prohibitions are NOT delayed. While high-risk system rules got pushed back to 2027, prohibited practices remain prohibited right now. If your AI system falls into any banned category, you needed to stop using it by February 2025 regardless of any other timeline adjustments.

August 2, 2025: General Purpose AI Transparency Rules Take Effect

On August 2, 2025, twelve months after the Act entered into force, transparency and copyright obligations for general-purpose AI models took effect. This deadline directly affects foundation models, including the large language models behind ChatGPT and Claude.

From this date, providers of general purpose AI models must prepare and make publicly available detailed summaries of the content used to train their models. This includes copyrighted material, which has created significant controversy because some AI developers claim this requirement is technologically infeasible given how modern training datasets are constructed.

These models must also meet technical documentation requirements, and their providers must implement systems to ensure outputs comply with EU copyright law. The European Commission published templates to help providers summarize training data, though implementation challenges remain substantial.

I have been watching closely to see how major AI companies handle this requirement. Some have published summaries, others are still working through technical challenges, and enforcement so far has focused more on guidance than penalties as regulators recognize the complexity involved.

October 2025: GPAI Rules Enforcement Monitoring Begins

By October 2025, regulatory authorities began actively monitoring compliance with the general-purpose AI transparency requirements that took effect in August. This monitoring phase marked a turning point: it revealed implementation gaps and technical challenges that feed into ongoing policy discussions about whether requirements need adjustment.

The Commission published guidance documents throughout fall 2025 to help companies understand exactly what compliance looks like for these provisions. This approach—publishing rules before detailed guidance—creates uncertainty for businesses trying to comply in good faith.

March 2026: High-Risk AI Rules Postponement Announced

The timeline becomes complicated here. In March 2026, the European Parliament voted to support postponing compliance deadlines for high-risk AI systems. The original timeline called for these requirements to become enforceable in August 2026, just 24 months after the Act entered into force.

The proposed delay pushes this compliance deadline to December 2, 2027, giving businesses an additional 16 months to prepare compliance systems. The rationale centers on the lack of finalized harmonized technical standards that companies need to know exactly what compliance requires.

This delay is not yet final law as of April 2026. The Digital Omnibus proposal containing this postponement must still complete the full legislative process. Parliamentary opposition is strong, and the timeline could change again before final approval.

Businesses face strategic uncertainty. Do you prepare for the original August 2026 deadline just in case delays do not pass? Do you assume the extension will happen and adjust timelines accordingly? There is no clear answer, which frustrates compliance teams across the industry.

December 2, 2027: New Deadline for High-Risk AI Compliance

If the Digital Omnibus proposal passes as currently drafted, December 2, 2027 becomes the enforceable deadline for high-risk AI system requirements. From this date, AI systems used in critical applications must meet all requirements, including risk assessment documentation, data governance measures, technical documentation, record-keeping systems, transparency obligations, human oversight mechanisms, accuracy standards, and cybersecurity protections.

High-risk AI providers must also register their systems in an EU-wide database, undergo conformity assessment procedures, and implement post-market monitoring. This is substantial compliance infrastructure that takes time and resources to build properly.

The extension to December 2027 aims to give companies this needed preparation time while also allowing technical standards bodies to finalize the detailed specifications companies need for compliance. Whether 40 months from entry into force (the original 24 plus the 16-month extension) proves sufficient remains to be seen.

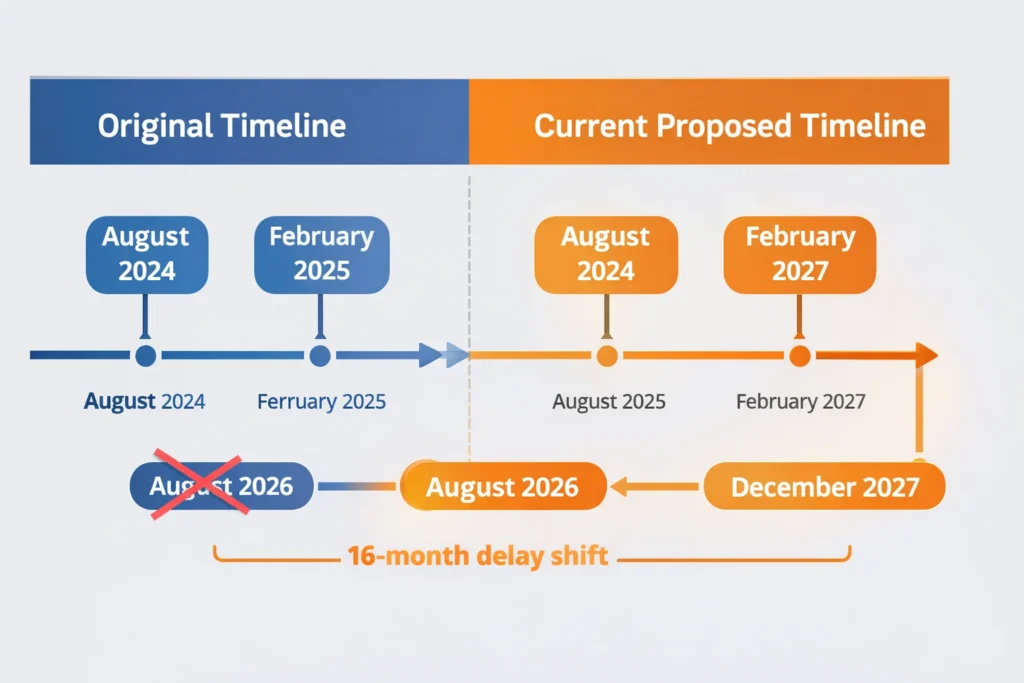

Original vs. Current Timeline Comparison

The original AI Act timeline differs significantly from current proposals.

For prohibited AI practices, both the original deadline and current deadline remain February 2, 2025. There has been no change here. These bans are already in effect.

For general-purpose AI transparency requirements, both the original deadline and current deadline remain August 2, 2025. These rules are also already active with no delays.

For high-risk AI systems, the original deadline was August 2, 2026, but the proposed new deadline is December 2, 2027. This change is still pending legislative approval and represents a 16-month extension.

For full implementation across all provisions, the original target was August 2026, while the current target is December 2027, creating that 16-month difference.

This comparison makes it clear that delays only affect high-risk system requirements. Other provisions remain on their original schedules, creating a split implementation reality that adds to the confusion.
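The month counts in this comparison are easy to check with a few lines of date arithmetic. This is a rough sketch using only the dates stated in this article; `DEADLINES` and `months_between` are hypothetical names, and the December 2027 date is a proposal that is not yet law.

```python
from datetime import date

# Dates as stated in this article. The December 2027 date is proposed, not final.
ENTRY_INTO_FORCE = date(2024, 8, 1)
DEADLINES = {
    # provision: (original deadline, current or proposed deadline)
    "prohibited practices": (date(2025, 2, 2), date(2025, 2, 2)),
    "general-purpose AI transparency": (date(2025, 8, 2), date(2025, 8, 2)),
    "high-risk AI systems": (date(2026, 8, 2), date(2027, 12, 2)),
}


def months_between(start: date, end: date) -> int:
    """Whole calendar months from start to end, rounding partial months down."""
    months = (end.year - start.year) * 12 + (end.month - start.month)
    if end.day < start.day:
        months -= 1
    return months


for provision, (original, proposed) in DEADLINES.items():
    shift = months_between(original, proposed)
    status = "unchanged" if shift == 0 else f"proposed {shift}-month extension"
    print(f"{provision}: {original} -> {proposed} ({status})")
```

Keeping the dates in one table like this makes it simple to update a compliance plan if the final legislation lands on a different deadline.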

Why the AI Act Delays Are So Controversial

The debate over postponing high-risk AI compliance deadlines reveals deep divisions about the proper balance between business interests, fundamental rights protection, and regulatory practicality. I have followed this political battle closely because it shows how AI governance involves competing values rather than simple technical adjustments.

The Commission’s Case and Big Tech’s Lobbying Campaign

European Commission officials frame the postponement as providing legal certainty and realistic timelines. Their core argument centers on the fact that harmonized technical standards needed for compliance are not ready yet.

The Commission estimates the Digital Omnibus will save businesses approximately one billion euros annually by reducing compliance costs and streamlining overlapping requirements. Commission representatives point to feedback from small- and medium-sized enterprises struggling with compliance burdens.

CEN (European Committee for Standardization) and CENELEC (European Committee for Electrotechnical Standardization) received mandates in June 2024 to develop harmonized standards under Articles 40-41, with expected delivery timelines extending into Q3 2027. Without these technical specifications, companies face genuine uncertainty about exactly what compliance requires.

However, the lobbying context makes this delay controversial. Major technology companies including Meta, Alphabet, and OpenAI pushed intensively for revisions, particularly provisions allowing them to use European personal data for AI model training.

Documents reviewed by privacy advocates show these companies specifically lobbied for extended timelines and simplified data protection transparency requirements. When the world’s largest AI companies ask for regulatory relief and receive substantial deadline extensions, questions arise about whose interests are truly being served.

From the Commission perspective, delays are pragmatic responses to implementation reality. From the critics’ perspective, this is regulatory capture favoring Big Tech over fundamental rights.

Privacy Groups Call It a Rollback of Rights

The opposition to delays is fierce and well-organized. A coalition of 127 organizations including digital rights groups, consumer protection advocates, and civil liberties organizations issued a joint statement condemning the Digital Omnibus proposal as the biggest rollback of digital fundamental rights in EU history.

These groups argue that the AI Act already represented a compromise that tilted too far toward industry interests. Further delays compound this problem by giving companies more time to deploy potentially harmful AI systems without adequate safeguards.

Privacy advocates particularly object to extending timelines for high-risk AI in employment, law enforcement, and critical infrastructure. These are precisely the applications where AI can cause the most harm to individuals and communities, yet companies operating these systems get additional years before facing binding requirements.

The fundamental rights framing is powerful because it reminds us that AI regulation is not just about business compliance costs. It is about protecting people from discrimination, surveillance, manipulation, and other harms that AI systems can enable at unprecedented scale.

I respect this perspective even when I see valid points in the Commission’s position as well. The challenge is genuinely balancing adequate protection with practical implementation timelines.

The Trump Administration’s Trade Pressure

A factor that received less public attention but appears significant based on reporting from the Financial Times is pressure from the United States government regarding trade tensions related to AI regulation.

Reports indicate that EU officials engaged with the Trump administration on AI Act adjustments as part of wider simplification processes. US government representatives apparently warned about potential trade conflicts if EU AI rules created what they characterized as discriminatory barriers to American technology companies.

This international political dimension adds another layer to the delay controversy. Is the EU adjusting timelines based on legitimate implementation concerns, or is it bowing to pressure from a foreign government protecting its dominant technology sector?

The fact that the Commission’s position shifted dramatically between July 2024 when a spokesperson dismissed pause calls and March 2026 when major delays were proposed suggests external pressures played some role. Whether those pressures were primarily from Big Tech lobbying, US government influence, or genuine implementation challenges remains debated.

Parliamentary Opposition: Bowing Down to Tech Bros

Inside the European Parliament, critics of the Digital Omnibus proposal have used unusually sharp language to express their opposition. The phrase bowing down to tech bros appeared in multiple Parliamentary statements, reflecting anger at what critics see as regulatory capture.

The AI Act went through years of negotiation and compromise before passage in 2024. Those negotiations already incorporated industry feedback and balanced various interests. To reopen core timelines within two years of passage, before any actual enforcement has occurred, strikes critics as abandoning hard-won protections under lobbying pressure.

Some Members of European Parliament argue that delays send exactly the wrong signal about EU commitment to AI governance. If major provisions get postponed before they even take effect, what credibility does the regulatory framework retain?

The political battle between the Commission and Parliament over these delays remains unresolved. The outcome will significantly shape both the actual implementation timeline and the broader perception of whether the EU can maintain strong AI governance in the face of industry and geopolitical pressure.

My sense, based on tracking implementation across member states and recognizing that each organization’s situation differs, is that some form of delay will likely pass given the legitimate concerns about standards readiness. However, the final timeline may be shorter than the Commission proposed and could include stronger enforcement commitments to balance the extension.

How the EU AI Act Affects Businesses: Compliance Requirements by Company Type

Understanding how the AI Act actually affects your business requires looking beyond general principles to specific obligations based on what role you play in the AI ecosystem. I have broken this down by stakeholder type because requirements differ significantly depending on whether you develop AI, deploy it, or import it into Europe.

If You Develop or Provide AI Systems

AI system providers face the most extensive compliance obligations under the Act. If you develop AI systems that will be placed on the EU market or put into service in the EU, you need to understand these requirements regardless of where your company is located.

For high-risk AI systems, you must establish a quality management system covering the entire AI lifecycle. This includes risk assessment and mitigation processes following EU specifications, data governance ensuring training datasets are relevant and free from bias, technical documentation demonstrating how your system meets requirements, automatic logging of events, and transparency provisions enabling users to understand system outputs.

You also must ensure appropriate levels of accuracy, robustness, and cybersecurity throughout your AI system’s lifecycle. Human oversight measures need to be designed into the system architecture, not just added afterward.

Before placing a high-risk AI system on the market, you must complete a conformity assessment. Depending on your system’s specific use case, this might involve third-party assessment or self-assessment following detailed conformity protocols.

Before your system goes on the market, you must also register it in the EU database for high-risk AI systems maintained by the European AI Office. This registration requirement creates public visibility and accountability for high-risk applications.

The Act’s transparency obligations for AI-generated content, set out in Article 50 and scheduled under the original timeline to apply from August 2, 2026, mean that content generated by your systems must be clearly marked as such. Users need to know when they are viewing, reading, or hearing AI-generated material rather than human-created content.

For foundation models, including large language models, you must prepare detailed summaries of training data including copyrighted content. You need technical documentation covering model architecture, training processes, and capabilities. Systems must be designed to ensure outputs respect EU copyright law.

I know from watching implementation attempts that the copyright disclosure requirement creates genuine technical challenges. Some AI developers argue it is technologically infeasible to provide content summaries given how modern training datasets are constructed from vast internet scrapes. This remains an area of active tension between regulatory requirements and technical reality.

If You Deploy or Use AI Systems in Your Business

The Act sets obligations for deployers that are distinct from those for providers. If you use AI systems supplied by others in a professional context, you are considered a deployer under the Act.

For high-risk AI systems, you must ensure appropriate human oversight during system operation. This means having qualified personnel who can understand the system’s outputs, interpret its operation, and intervene when necessary.

You need to monitor the AI system’s operation according to the system provider’s instructions. If you detect any serious incidents or malfunctioning, you must inform the provider and suspend use until the issue is resolved.

Input data governance is your responsibility as a deployer. You must ensure data fed into the system is relevant to the intended purpose and compliant with provider specifications.

Transparency toward affected persons is critical. If your high-risk AI system makes decisions affecting individuals, those people generally have a right to be informed that AI is being used and to understand the logic behind decisions that significantly affect them.

You must maintain logs generated by high-risk AI systems for timeframes specified in the regulation. These logs serve as accountability mechanisms if questions arise about system performance or decisions.

Many businesses wrongly assume buying AI tools from established providers means they have no compliance obligations. This is incorrect. Deployers have substantial responsibilities even when they do not develop the underlying systems.

If You Are Outside the EU But Serve EU Customers

Geographic location does not exempt you from EU AI Act requirements. The regulation has extraterritorial reach similar to GDPR, applying based on where AI systems are used rather than where companies are headquartered.

If you are a US, Asian, or other non-EU company and your AI system is placed on the EU market, deployed within the EU, or produces outputs used in the EU, you must comply with applicable provisions.
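The three triggers in the paragraph above can be expressed as a simple first-pass screen. This is a sketch under stated assumptions, not a legal test: `AISystemFootprint` and `likely_in_scope` are hypothetical names, and the check ignores the narrow military, national security, and research exceptions mentioned earlier in this guide.

```python
from dataclasses import dataclass


@dataclass
class AISystemFootprint:
    """Hypothetical record of where a system touches the EU."""
    placed_on_eu_market: bool
    deployed_in_eu: bool
    outputs_used_in_eu: bool
    headquarters_country: str  # recorded, but deliberately ignored by the check


def likely_in_scope(footprint: AISystemFootprint) -> bool:
    """First-pass screen: any EU touchpoint suggests the Act may apply."""
    return (
        footprint.placed_on_eu_market
        or footprint.deployed_in_eu
        or footprint.outputs_used_in_eu
    )


# A US-headquartered vendor whose tool is deployed in France.
us_vendor = AISystemFootprint(
    placed_on_eu_market=False,
    deployed_in_eu=True,
    outputs_used_in_eu=False,
    headquarters_country="US",
)
print(likely_in_scope(us_vendor))
```

The headquarters field is kept in the record only to make the point explicit: it plays no part in the result.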

As OpenAI’s compliance commitment demonstrates, major US technology companies cannot simply ignore EU rules if they want to serve European customers.

In practice, you have two options. You can establish an authorized representative in the EU who handles compliance matters on your behalf. This representative becomes your point of contact with regulators and takes on certain legal responsibilities.

Alternatively, you can establish a legal entity within the EU that takes on provider or deployer obligations directly. Many non-EU companies are setting up European subsidiaries partly to manage EU AI Act compliance more effectively.

The Brussels Effect means that even if you primarily serve non-EU markets, you might choose to comply with EU AI Act standards because they represent the strictest global requirements. Building systems that meet EU standards ensures you can enter any market rather than maintaining separate compliance frameworks for different regions.

How to Assess If Your AI System Is High-Risk

People frequently ask me how to determine whether their AI system qualifies as high-risk. The regulation defines high-risk categories based on use case rather than technical characteristics.

AI systems used as safety components in critical infrastructure including road traffic, water, gas, heating, and electricity supply are considered high-risk. If your AI helps manage infrastructure where failures could endanger public safety, it is high-risk.

Educational and vocational training applications that determine access to education, assess students, or influence educational pathways are high-risk. This includes AI systems that help make admission decisions or significantly influence grading and evaluation.

Employment systems that screen job applications, evaluate candidates during interviews, make hiring or promotion decisions, monitor worker performance, or influence contract termination are all high-risk. The employment category is particularly broad and catches many HR technology tools.

Access to essential private and public services falls into the high-risk category. This includes AI that evaluates creditworthiness for loans, determines eligibility for public benefits, assesses emergency response priorities, or makes similar access decisions.

Law enforcement applications are almost universally high-risk, including systems for individual risk assessment, polygraph analysis, emotion recognition for law enforcement purposes, and detecting deep fakes as evidence.

Migration and border control systems that assess visa applications, verify documents, or assist in asylum and immigration decisions are high-risk.

Administration of justice applications including AI that assists in legal interpretation, case research, or helps determine sentences are high-risk due to their impact on fundamental rights.

If your AI system falls into any of these categories, you need to start preparing for high-risk compliance obligations regardless of whether deadlines are August 2026 or December 2027. The delay does not change whether your system is classified as high-risk.
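The use-case screening described above can be sketched as a simple tag lookup. This is a minimal illustration, not a legal test: the area labels paraphrase the categories listed in this section, and the `AISystem` class and its `use_areas` tags are hypothetical names that an internal review team would assign.

```python
from __future__ import annotations
from dataclasses import dataclass

# Area labels paraphrase the categories described above; they are
# illustrative shorthand, not the regulation's legal wording.
HIGH_RISK_AREAS = {
    "critical_infrastructure",
    "education",
    "employment",
    "essential_services",
    "law_enforcement",
    "migration_border_control",
    "administration_of_justice",
}

@dataclass
class AISystem:
    name: str
    use_areas: set[str]  # hypothetical tags assigned during an internal review

def high_risk_matches(system: AISystem) -> set[str]:
    """Return the high-risk areas that this system's use cases touch."""
    return system.use_areas & HIGH_RISK_AREAS

def is_high_risk(system: AISystem) -> bool:
    """A system is flagged when at least one use area is on the list."""
    return bool(high_risk_matches(system))

cv_screener = AISystem("cv-screener", {"employment"})
spam_filter = AISystem("spam-filter", {"email_filtering"})
print(is_high_risk(cv_screener))  # True
print(is_high_risk(spam_filter))  # False
```

Note that the check keys on how the system is used, not on the model behind it, which mirrors the use-case logic of the regulation. A flagged system still needs a proper legal review.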

The Compliance Dilemma: Rush to Comply or Wait for Final Standards

A strategic question frustrates compliance teams across the industry. Should you rush to implement compliance measures now despite uncertainty about final technical standards? Or should you wait for standards to be finalized and risk being unable to comply in time?

Neither option is clearly correct. The delayed and uncertain timeline creates this problem.

If you rush to comply based on current understanding, you might build systems that do not match final technical standards when they are eventually published. This wastes resources and could require expensive rebuilding later.

If you wait for final standards, you face what AI policy analysts call the non-compliance overnight risk. Complex AI systems cannot be retrofitted quickly. If you wait until December 2026 to start compliance work for a December 2027 deadline, you might find that 12 months is not enough time to properly rebuild data governance, implement human oversight mechanisms, create technical documentation, and undergo conformity assessment.

My recommendation, based on tracking implementation across 27 member states, is a middle path. Every organization's situation differs, so consult legal counsel familiar with your specific use cases before making compliance decisions.

Start foundational compliance work now on aspects that will not change regardless of final standards. Build your quality management framework. Begin cataloging your AI systems and classifying them by risk tier. Start improving data governance and documentation practices.

These foundational elements will be required no matter what final technical standards specify. You are not wasting effort by building this infrastructure now.

For specific technical implementations that depend on harmonized standards, maintain flexibility. Design systems with the expectation that you will need to adjust them when standards are finalized. Build in time buffers and resource reserves for adaptation.

Most importantly, do not assume the delays mean you can ignore this entirely until 2027. That is the surest path to non-compliance when deadlines actually hit.

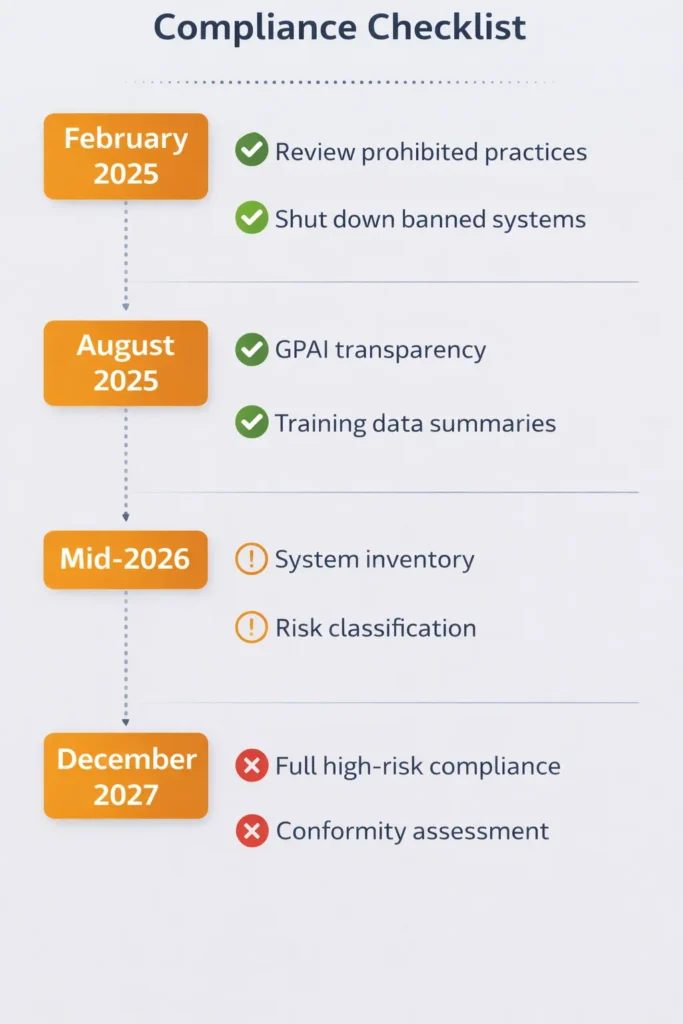

EU AI Act Compliance Checklist by Deadline

Here is a practical compliance checklist organized by key AI compliance deadlines, both those that have passed and those coming up.

For February 2, 2025, which has already passed, you needed to ensure your AI systems do not engage in any prohibited AI practices. Review all your AI applications for social scoring systems, real-time biometric surveillance, cognitive manipulation, or creation of non-consensual intimate imagery. If any of your systems did these things, they needed to be shut down or fundamentally redesigned by this date.

For August 2, 2025, also already passed, if you provide general-purpose AI models, you needed to publish training data summaries, create technical documentation, and implement copyright compliance measures. If you have not done this yet and you operate GPAI systems in the EU market, you are currently non-compliant.

For mid to late 2026, regardless of whether delays are finalized, you should complete AI system inventory and risk classification. Know which of your systems qualify as high-risk. Establish your quality management framework. Begin data governance improvements. Start creating technical documentation. Identify human oversight requirements for high-risk systems.

For December 2027, assuming delays pass, all high-risk AI systems must be fully compliant with documentation, conformity assessment, database registration, human oversight, accuracy requirements, logging systems, and cybersecurity measures in place. This is the hard deadline for complete high-risk compliance.

If delays do not pass and the original August 2026 timeline holds, all those high-risk requirements would need to be ready much sooner. This uncertainty is exactly why you cannot afford to wait until you know the final deadline.
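To keep both possible high-risk deadlines in view, the checklist above can be tracked as data. The sketch below is a hedged illustration: the labels are shorthand for the checklist items, the `split_by_status` helper is hypothetical, and the exact day used for the December 2027 date is an assumption, since this article gives only the month.

```python
from datetime import date

# Dates follow the checklist above. Both candidate high-risk deadlines are
# listed because the delay is not final. The day-of-month for December 2027
# is an assumption; the article states only "December 2027".
DEADLINES = [
    (date(2025, 2, 2), "Prohibited practices removed"),
    (date(2025, 8, 2), "GPAI documentation and training data summaries"),
    (date(2026, 8, 2), "High-risk compliance (original timeline)"),
    (date(2027, 12, 2), "High-risk compliance (if delays pass)"),
]

def split_by_status(today: date):
    """Separate deadlines that have passed from those still ahead."""
    passed = [(d, label) for d, label in DEADLINES if d <= today]
    upcoming = [
        (d, label, (d - today).days) for d, label in DEADLINES if d > today
    ]
    return passed, upcoming

passed, upcoming = split_by_status(date(2026, 4, 15))
for d, label in passed:
    print(f"{d.isoformat()}  PASSED  {label}")
for d, label, days_left in upcoming:
    print(f"{d.isoformat()}  {days_left} days left  {label}")
```

Planning against the earlier of the two high-risk dates is the conservative choice while the delay remains undecided.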

The 4-Tier Risk Classification System Explained

The Act's entire regulatory architecture rests on classifying AI systems into four risk tiers with different regulatory treatments. Understanding which tier your AI falls into is the first step toward knowing what obligations you face.

Unacceptable Risk: AI Practices Banned Completely

The highest risk tier consists of AI applications deemed so dangerous to fundamental rights that they are prohibited entirely. These bans apply with only very narrow exceptions. They have been enforceable since February 2025 with no delays.

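Social scoring systems that evaluate or classify people based on their social behavior, personal characteristics, or personality traits are completely banned under Article 5(1)(c) of Regulation (EU) 2024/1689. This prohibition applies to both government and private sector applications. The EU explicitly rejected China-style social credit systems, in which scores built from social behavior, financial history, and personal relationships affect access to services, travel, and social opportunities, as incompatible with European values.

After the March 2026 Parliamentary vote, the social scoring ban was extended to explicitly cover private companies, closing a potential loophole. Companies cannot create internal social scoring systems for employees or customers, for example by rating employees on their social media activity, personal relationships, or lifestyle choices in ways that affect employment decisions.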

You can still use performance metrics based on job-related factors. Evaluating a salesperson based on sales numbers is fine. Evaluating them based on their social connections, personal habits, or personality traits derived from AI analysis of their behavior is prohibited.

This prohibition is in effect now. If your organization operates any kind of social scoring system, it needed to be shut down by February 2025. Continued operation exposes you to the maximum penalties under the Act.

Real-Time Biometric Surveillance in Public Spaces

Real-time remote biometric identification systems in publicly accessible spaces face a general prohibition. This primarily targets live facial recognition systems that scan crowds in public areas to identify individuals.

The prohibition is not absolute. There are three narrow exceptions for law enforcement: searching for specific victims of crime including missing children, preventing genuine and present or genuine and foreseeable terrorist threats, and detecting or identifying suspects of specific serious crimes.

Even these exceptions require prior judicial or administrative authorization, strict necessity, and appropriate limitations in time and space. Law enforcement cannot simply deploy permanent facial recognition systems across a city.

The keyword is real-time. The prohibition specifically targets live scanning as opposed to post-event analysis of recorded footage. Using facial recognition to analyze security footage after a crime occurred faces different rules than deploying live systems scanning people as they walk down the street.

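For private companies, the practical effect is similar, although the legal route differs. The prohibition in Article 5(1)(h) is written for law enforcement use, so its narrow exceptions are not available to private actors. A private operator that wants to run real-time facial recognition in shopping centers, offices, or other publicly accessible places instead runs into the high-risk rules for remote biometric identification and the GDPR's strict limits on processing biometric data, which leave very little room for such deployments.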

This has already impacted some businesses that were experimenting with facial recognition for customer identification or security purposes. Those systems needed to be shut down or fundamentally redesigned to avoid real-time public identification.

Cognitive Behavioral Manipulation

AI systems that deploy subliminal techniques beyond a person’s consciousness or purposefully manipulative techniques to distort behavior in ways that cause significant harm are prohibited.

The regulation specifically calls out exploiting vulnerabilities of specific groups due to their age or physical or mental disability. The example I keep coming back to is voice-activated toys that use AI to encourage dangerous behavior in children by exploiting their developmental vulnerabilities.

An AI system that uses psychological profiling to manipulate someone into making financial decisions against their interests could fall into this category. Systems designed to exploit gambling addiction, eating disorders, or other vulnerabilities through targeted manipulation are prohibited.

The challenge here is distinguishing prohibited manipulation from permitted persuasion. Advertising has always involved persuasion. Recommendation systems influence behavior. When does influence cross the line into prohibited manipulation?

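The key factors are whether the system exploits specific vulnerabilities, whether it operates beyond conscious awareness through subliminal or deliberately manipulative techniques, and whether it causes significant harm. Significant harm is required in every case. It must be paired with either exploitation of vulnerabilities or the use of subliminal or manipulative techniques, which the Act treats as two separate prohibitions.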

This is a harder prohibition to enforce than social scoring or facial recognition because manipulation can be subtle. But the prohibition exists, and systems designed primarily to manipulate vulnerable people into harmful decisions violate it.

Nudifier Apps and Non-Consensual Deepfakes

The prohibition on AI systems that create or manipulate image, audio, or video content to fabricate or alter non-consensual intimate imagery is one of the newest additions, actively enforced starting February 2026.

Nudifier apps specifically use AI to digitally remove clothing from photos, creating synthetic intimate images without the subject’s consent. These applications violate personal dignity and privacy in obvious ways.

The prohibition extends beyond nudifier apps to other non-consensual intimate imagery created through AI manipulation. Deepfake pornography using someone’s face without permission falls under this ban.

What is permitted is consensual creative uses of AI image generation and manipulation. Creating synthetic images or videos with the knowing consent of everyone depicted does not violate this prohibition. The key element is the non-consensual nature of the creation.

Enforcement of this prohibition has begun with authorities targeting websites and apps that offer nudifier services. Providers face removal from app stores and potential penalties under the Act.

If your company creates AI tools that could be used for image or video manipulation, you need clear terms of service prohibiting non-consensual intimate imagery creation and technical measures to prevent abuse of your tools for this purpose.

These four categories represent the AI practices considered so harmful that they are banned entirely rather than regulated. The prohibitions are in effect now with full enforcement, and penalties for violations are severe. Any business using AI needs to ensure none of their applications fall into these prohibited categories.

High Risk: AI Systems Requiring Strict Compliance

The next tier consists of high-risk AI systems that are permitted but face extensive regulatory requirements. These are applications where AI could significantly impact health, safety, or fundamental rights.

AI used as safety components of critical infrastructure falls here. If your AI system manages electrical grids, water supply, transportation networks, or similar infrastructure where failures could endanger public safety, you are in the high-risk category.

Educational applications that determine access to educational institutions or influence educational pathways are high-risk. This includes AI systems used in student admission processes, proctoring and assessment tools that significantly influence grades, and systems that guide educational or career pathway recommendations.

The employment and worker management category is particularly broad. AI systems that screen job applications, conduct or assist in candidate interviews, make or support hiring decisions, evaluate worker performance, or influence promotion or termination decisions all qualify as high-risk. Many HR technology tools fall into this category.

Access to essential services represents another major high-risk area. AI that evaluates creditworthiness for loans, assesses eligibility for public benefits like healthcare or social assistance, determines emergency response priorities, or makes similar access decisions faces high-risk requirements.

Law enforcement applications are almost always high-risk, including individual risk assessment tools, polygraph analysis, emotion recognition for law enforcement, deep fake detection systems, and similar applications that affect justice and law enforcement outcomes.

Migration and border control systems that verify travel documents, assess visa or asylum applications, examine complaints about fundamental rights violations, or support border control decisions are high-risk.

Administration of justice AI including tools that assist courts in legal interpretation, case research, or sentencing recommendations fall into the high-risk category given their impact on legal rights.

For all these high-risk systems, providers must: build quality management systems, conduct risk assessments, ensure data governance, create extensive technical documentation, implement logging systems, provide user transparency, enable human oversight, maintain accuracy and robustness, and ensure cybersecurity. They must undergo conformity assessment and register systems in the EU database.

Deployers of high-risk systems must monitor operation, ensure human oversight, use appropriate input data, and maintain logs. Both AI system providers and deployers face substantial compliance burdens for high-risk applications.

Limited Risk: Transparency Requirements for General Purpose AI

The third tier involves AI systems that need transparency but do not face the full compliance burden of high-risk systems. General purpose AI models are the primary example here.

Systems like ChatGPT, Claude, and other large language models fall into this category. They have broad capabilities and can be used for many purposes, making them difficult to classify as specifically high-risk or minimal-risk.

For general-purpose AI, the main requirement is transparency. These systems must clearly disclose to users that they are interacting with AI rather than a human. You have probably noticed chatbots now explicitly stating they are AI assistants at the beginning of conversations. This is how providers meet the disclosure requirement.

Providers must also prepare and publish summaries of the content used to train these models. This includes copyrighted material, which has created significant controversy. Some AI developers claim it is technologically infeasible to provide meaningful summaries of training datasets constructed from massive internet scrapes.

Technical documentation must cover model architecture, training processes, capabilities, limitations, and risks. This documentation helps users understand what the model can and cannot reliably do.

Systems must implement measures to ensure outputs respect EU copyright law. This means avoiding reproduction of copyrighted content in ways that violate rights holders’ interests.

Algorithmic transparency requirements mean users should be able to understand at least at a high level how the system works, what data it was trained on, and what its limitations are.

Compared to high-risk systems, the compliance burden for general-purpose AI is lighter. There is no conformity assessment requirement, no database registration, and no extensive quality management system mandate. The focus is on transparency and copyright compliance rather than comprehensive risk management.

Minimal Risk: Unregulated AI Applications

The vast majority of AI applications fall into the minimal risk category and face essentially no specific regulatory requirements under the AI Act.

Spam filters that sort your email do not require AI Act compliance. Video game AI that controls non-player characters is unregulated. Recommendation systems that suggest products or content are minimal risk. Basic automation tools, scheduling algorithms, and simple decision support systems typically fall here.

These applications do not significantly impact fundamental rights, health, or safety. They are low-stakes uses of AI where regulatory intervention is not justified by the risks involved.

This minimal risk category is why I emphasize that the AI Act does not regulate all AI indiscriminately. The regulatory burden concentrates on applications that could genuinely harm people or violate their rights. The vast majority of AI development continues with minimal regulatory constraints.

Providers of minimal-risk AI systems are encouraged to adopt voluntary codes of conduct and follow best practices, but these are not legally mandated. The market can drive standards for low-risk applications without regulatory enforcement.

Understanding these four tiers is crucial because it determines what obligations you face. Most businesses will find that most of their AI applications fall into minimal risk with no special compliance requirements. It is the high-risk and general-purpose AI applications that require focused compliance attention.
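One way to picture the four tiers is as a precedence check, where the strictest matching tier wins. The sketch below is a simplified illustration under stated assumptions: the tag names are hypothetical shorthand for the categories described in this article, and a real classification requires legal review of the actual use case.

```python
from enum import Enum

class RiskTier(Enum):
    UNACCEPTABLE = 1
    HIGH = 2
    LIMITED = 3
    MINIMAL = 4

# Hypothetical tags paraphrasing the categories discussed in this article.
PROHIBITED = {
    "social_scoring",
    "realtime_public_biometric_id",
    "harmful_manipulation",
    "nonconsensual_intimate_imagery",
}
HIGH_RISK = {
    "critical_infrastructure",
    "education",
    "employment",
    "essential_services",
    "law_enforcement",
    "migration_border_control",
    "administration_of_justice",
}
TRANSPARENCY = {"general_purpose_model", "chatbot"}

def classify(tags: set) -> RiskTier:
    """Return the strictest tier matched by any tag; default to MINIMAL."""
    if tags & PROHIBITED:
        return RiskTier.UNACCEPTABLE
    if tags & HIGH_RISK:
        return RiskTier.HIGH
    if tags & TRANSPARENCY:
        return RiskTier.LIMITED
    return RiskTier.MINIMAL

inventory = {
    "spam-filter": {"email_filtering"},
    "support-chatbot": {"chatbot"},
    "cv-screener": {"employment", "chatbot"},
}
for name, tags in inventory.items():
    print(name, classify(tags).name)
```

The `cv-screener` entry shows why the order of checks matters: a chatbot interface would only trigger transparency duties, but its employment use case places the whole system in the high-risk tier.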

EU AI Act Enforcement Challenges: Why Implementation Is Complex

Beyond the rules themselves, I have been watching closely how enforcement infrastructure develops because there is often a significant gap between regulations on paper and effective implementation in practice.

The European AI Office: Structure and Capacity

The European AI Office was established as the central EU body responsible for coordinating AI Act implementation and enforcement. It sits within the European Commission and serves as the primary authority for general-purpose AI oversight while coordinating with national regulators in the member states on other provisions.

What concerns me is whether the AI Office has sufficient capacity to oversee an ecosystem of thousands of companies developing countless AI systems. The Office is still building its staff and expertise.

The AI Office’s responsibilities include developing implementing acts and guidelines that provide detailed technical specifications beyond what the Act itself contains. These implementing acts are crucial because they translate high-level regulatory requirements into specific, actionable compliance steps.

The Office also coordinates with member state authorities to ensure consistent interpretation and AI Act enforcement across the EU. This coordination function is essential because the last thing businesses need is 27 different interpretations of the same requirements creating a fragmented compliance landscape.

For general-purpose AI models, the AI Office has direct supervisory authority. It can request documentation, conduct evaluations, and take enforcement actions against non-compliant providers. This centralized oversight makes sense for AI models that operate across all member states.

The Office maintains the EU database where high-risk AI systems must be registered. This database will eventually provide public visibility into what high-risk AI systems are being deployed across Europe, though it is still being built out as of 2026.

I am watching to see whether the AI Office can scale effectively as enforcement deadlines approach. Building regulatory expertise in a rapidly evolving technology field while coordinating across 27 countries is an enormous organizational challenge.

How EU Member States Are Implementing the AI Act

While the AI Office handles central coordination, individual EU member states must establish national competent authorities to enforce AI Act provisions within their territories. This is where implementation gets complicated.

Implementation varies significantly across member states. Some are moving quickly to designate authorities and build enforcement capacity. Others have been slower, creating an uneven landscape of regulatory readiness across Europe.

I have seen reports highlighting Poland’s challenges in establishing an effective AI regulator. The country struggled to staff its authority with technical experts who understand both AI systems and regulatory enforcement. This is not unique to Poland but illustrates a common problem.

National authorities must have the technical expertise to evaluate AI systems, the legal authority to conduct investigations and impose penalties, and the resources to monitor compliance across potentially thousands of companies. Building this capacity from scratch takes time and money that many member states are still working to secure.

Coordination between national authorities and the European AI Office remains a work in progress. Clear protocols are needed for which authority handles which cases, how information gets shared, and how enforcement decisions are coordinated to avoid conflicting approaches.

Businesses operating across multiple EU countries need consistency. If France interprets a requirement one way, Germany another way, and Italy a third way, companies face impossible compliance situations. Harmonization is critical but proving difficult to achieve.

I expect implementation variance across member states to remain a challenge through at least 2027. Some countries will have robust enforcement while others lag behind, creating regulatory arbitrage opportunities that undermine the Act’s effectiveness.

The Standards Gap: Waiting for Technical Specifications

One of the most legitimate criticisms of the current implementation timeline is that harmonized technical standards are not ready yet. These standards are essential because they provide the detailed specifications companies need to know exactly what compliance requires.

The Act references standards bodies like CEN and CENELEC that must develop these technical specifications. Standards development is a slow, consensus-based process involving industry stakeholders, technical experts, and regulators. It cannot be rushed without sacrificing quality.

Without finalized standards, companies face genuine uncertainty about compliance. The Act might require robust data governance for high-risk AI, but what specifically does robust mean? What documentation formats are acceptable? What testing methodologies demonstrate compliance?

This standards gap is the Commission’s primary justification for delaying high-risk system deadlines to December 2027. They argue that enforcing requirements before companies have clear technical specifications about how to meet them would be unfair and counterproductive.

I see validity in this argument even while recognizing that delays also serve industry interests. Standards genuinely are not ready, and companies genuinely need them for effective compliance.

The challenge is ensuring that standards development does not become a perpetual excuse for further delays. At some point, good enough standards must be accepted even if they are not perfect, or enforcement will never actually begin.

Enforcement Reality Check: Will Anyone Actually Be Fined?

When will we see significant AI Act enforcement actions?

As of April 2026, no major AI Act penalties have been imposed. Enforcement so far has focused entirely on guidance, stakeholder engagement, and helping companies understand requirements. This is normal for new regulations, but it creates a situation where rules exist on paper without practical enforcement teeth.

The gap between theoretical penalties and actual enforcement capacity concerns me. Article 99 establishes the penalty structure with specific percentages tied to global revenue, mirroring GDPR’s Article 83 approach that resulted in €4.3 billion in fines from May 2018 through December 2025. The Act authorizes fines up to 35 million euros or 7% of global annual revenue for the most serious violations. These are potentially massive penalties that could destroy companies.
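The arithmetic behind the top penalty tier is easy to sketch. The example below assumes the cap is whichever of the two figures is higher, which is my reading of how Article 99 applies to larger companies. Smaller companies are treated differently and are not modeled here, and the function name is hypothetical.

```python
# Top-tier cap from the figures above: 35 million euros or 7% of global
# annual revenue. Assumption: the higher of the two applies. Special
# treatment for smaller companies is not modeled in this sketch.
FIXED_CAP_EUR = 35_000_000
REVENUE_PERCENT = 7

def max_fine_eur(global_annual_revenue_eur: int) -> int:
    """Upper bound of the fine for the most serious violations, in euros."""
    revenue_based_cap = global_annual_revenue_eur * REVENUE_PERCENT // 100
    return max(FIXED_CAP_EUR, revenue_based_cap)

# A company with 2 billion euros in revenue: 7% exceeds the fixed amount.
print(max_fine_eur(2_000_000_000))  # 140000000

# A company with 100 million euros in revenue: the fixed amount is higher.
print(max_fine_eur(100_000_000))  # 35000000
```

These are ceilings, not predictions. The actual amount in any case would depend on the regulator's assessment of the violation.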

Imposing such penalties requires technical investigations proving non-compliance, legal proceedings respecting due process rights, and regulatory authorities with the resources and expertise to build enforceable cases. This takes time and capacity that many authorities do not yet have.

Based on GDPR enforcement patterns from 2018-2020 and early AI Act enforcement activities in Q1 2026, I expect the first wave of enforcement to target clear-cut violations of prohibited AI practices rather than complex high-risk compliance failures. It is much easier to prove someone is operating a banned social scoring system than to demonstrate that a high-risk AI system’s data governance framework is inadequate.

The enforcement reality also depends on political will. Will regulators actually impose the maximum penalties authorized under the Act, or will they take a softer approach focused on compliance assistance and warnings? Early enforcement decisions will set important precedents.

My prediction is that we will not see significant enforcement actions until late 2027 or 2028, after high-risk deadlines have passed and authorities have built sufficient capacity. This means businesses have a window where formal requirements exist but practical enforcement is limited. Whether you use that window to prepare properly or to delay action is a crucial strategic choice.

4 Prohibited AI Practices Banned Right Now Since February 2025

While high-risk requirements face delays, prohibitions on unacceptable-risk AI systems have been in effect since February 2025. What is banned right now? These prohibitions carry the highest penalties with no grace period.

1. Social Scoring Systems Public and Now Private Sector

Social scoring systems that evaluate or classify people based on their behavior, personal characteristics, or predicted characteristics are completely prohibited throughout the EU under Article 5(1)(c) of Regulation (EU) 2024/1689. This applies to both government and private sector applications.

The classic example is China’s social credit system where people receive scores based on their social behavior, financial history, and personal relationships. These scores then affect access to services, travel permissions, and social opportunities. The EU explicitly rejected this model as incompatible with European values.

After the March 2026 Parliamentary vote, the social scoring ban was extended to explicitly cover private companies, closing a potential loophole. A company cannot rate employees based on their social media activity, personal relationships, or lifestyle choices in ways that affect employment decisions.

You can still use performance metrics based on job-related factors. Evaluating a salesperson based on sales numbers is fine. Evaluating them based on their social connections, personal habits, or personality traits derived from AI analysis of their behavior is prohibited.

If your organization operates any kind of social scoring system, it needed to be shut down by February 2025. Continued operation exposes you to the maximum penalties under the Act.

2. Real-Time Biometric Surveillance in Public Spaces

Real-time remote biometric identification systems in publicly accessible spaces face a general prohibition. This primarily targets live facial recognition systems that scan crowds in public areas to identify individuals.

The prohibition is not absolute. There are three narrow exceptions for law enforcement: searching for specific victims of crime including missing children, preventing genuine and present or genuine and foreseeable terrorist threats, and detecting or identifying suspects of specific serious crimes.

Even these exceptions require prior judicial or administrative authorization, strict necessity, and appropriate limitations in time and space. Law enforcement cannot simply deploy permanent facial recognition systems across a city.

The keyword is real-time. The prohibition specifically targets live scanning as opposed to post-event analysis of recorded footage. Using facial recognition to analyze security footage after a crime occurred faces different rules than deploying live systems scanning people as they walk down the street.

For private companies, this prohibition is essentially absolute. You cannot deploy real-time facial recognition in shopping centers, offices, public spaces, or anywhere else that is publicly accessible. The narrow law enforcement exceptions do not apply to private actors.

This has already impacted some businesses that were experimenting with facial recognition for customer identification or security purposes. Those systems needed to be shut down or fundamentally redesigned to avoid real-time public identification.

3. Cognitive Behavioral Manipulation

AI systems that deploy subliminal techniques beyond a person’s consciousness or purposefully manipulative techniques to distort behavior in ways that cause significant harm are prohibited.

The regulation specifically calls out exploiting vulnerabilities of specific groups due to their age or physical or mental disability. The example I keep coming back to is voice-activated toys that use AI to encourage dangerous behavior in children by exploiting their developmental vulnerabilities.

An AI system that uses psychological profiling to manipulate someone into making financial decisions against their interests could fall into this category. Systems designed to exploit gambling addiction, eating disorders, or other vulnerabilities through targeted manipulation are prohibited.

The challenge here is distinguishing prohibited manipulation from permitted persuasion. Advertising has always involved persuasion. Recommendation systems influence behavior. When does influence cross the line into prohibited manipulation?

The key factors are whether the system exploits specific vulnerabilities, whether it operates beyond conscious awareness through subliminal techniques, and whether it causes significant harm. All three elements generally need to be present for a prohibition.

This is a harder prohibition to enforce than social scoring or facial recognition because manipulation can be subtle. But the prohibition exists, and systems designed primarily to manipulate vulnerable people into harmful decisions violate it.

4. Nudifier Apps and Non-Consensual Deepfakes

The prohibition on AI systems that create or manipulate image, audio, or video content to fabricate or alter non-consensual intimate imagery is one of the newest additions, actively enforced starting February 2026.

Nudifier apps specifically use AI to digitally remove clothing from photos, creating synthetic intimate images without the subject’s consent. These applications violate personal dignity and privacy in obvious ways.

The prohibition extends beyond nudifier apps to other non-consensual intimate imagery created through AI manipulation. Deepfake pornography using someone’s face without permission falls under this ban.

What remains permitted are consensual creative uses of AI image generation and manipulation. Creating synthetic images or videos with the knowing consent of everyone depicted does not violate this prohibition. The key element is the non-consensual nature of the creation.

Enforcement of this prohibition has begun with authorities targeting websites and apps that offer nudifier services. Providers face removal from app stores and potential penalties under the Act.

If your company creates AI tools that could be used for image or video manipulation, you need clear terms of service prohibiting non-consensual intimate imagery creation and technical measures to prevent abuse of your tools for this purpose.

These four categories represent the AI practices considered so harmful that they are banned entirely rather than regulated. The prohibitions are in effect now with full enforcement, and penalties for violations are severe. Any business using AI needs to ensure that none of its applications falls into these prohibited categories.

What Happens If You Do Not Comply? Penalties and Real Consequences

Understanding the potential consequences of non-compliance helps businesses take EU AI Act obligations seriously rather than treating them as theoretical concerns.

AI Act Penalties: Fines Up to 7% of Global Revenue

The AI Act establishes a tiered penalty structure based on violation severity. The maximum fines are substantial enough to pose existential threats to companies.

For violations of prohibited AI practices, meaning using banned systems like social scoring or real-time biometric surveillance, fines can reach up to 35 million euros or 7% of total worldwide annual revenue from the preceding financial year, whichever amount is higher.

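That 7% of global revenue threshold is significant. For a large multinational technology company, this could mean penalties in the billions of euros. For smaller companies, a fine anywhere near 35 million euros would be company-ending, although the Act caps fines for SMEs and start-ups at the lower of the two amounts rather than the higher.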

For violations of other AI Act provisions including most high-risk system requirements, fines reach up to 15 million euros or 3% of total worldwide annual revenue, whichever is higher. This applies to failures in data governance, inadequate human oversight, missing documentation, and similar high-risk compliance failures.

For supplying incorrect, incomplete, or misleading information to authorities, fines go up to 7.5 million euros or 1.5% of total worldwide annual revenue, whichever is higher. This means trying to hide non-compliance through deception could itself trigger significant AI Act sanctions.
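The "whichever is higher" rule across the three tiers can be sketched as a small calculation. This is only an illustration of the arithmetic described above, not a compliance tool: the tier names and the function name are my own labels, the figures are the caps quoted in this article, and actual fines are set by authorities case by case up to these ceilings.

```python
from decimal import Decimal

# Ceilings as quoted in this article: (fixed cap in EUR, share of worldwide annual revenue).
# The tier names are illustrative labels, not terms taken from the regulation.
PENALTY_TIERS = {
    "prohibited_practice": (Decimal("35000000"), Decimal("0.07")),
    "other_obligation": (Decimal("15000000"), Decimal("0.03")),
    "misleading_information": (Decimal("7500000"), Decimal("0.015")),
}


def max_fine_eur(tier: str, worldwide_annual_revenue_eur) -> Decimal:
    """Return the fine ceiling for a tier: the higher of the fixed cap and the revenue share."""
    fixed_cap, revenue_share = PENALTY_TIERS[tier]
    revenue = Decimal(str(worldwide_annual_revenue_eur))
    return max(fixed_cap, revenue * revenue_share)


if __name__ == "__main__":
    # Compare a mid-sized company with a large multinational (hypothetical revenues).
    for revenue in (Decimal("100000000"), Decimal("50000000000")):
        for tier in PENALTY_TIERS:
            print(f"revenue={revenue:,} tier={tier} ceiling={max_fine_eur(tier, revenue):,.0f} EUR")
```

With these figures, the fixed cap and the percentage are equal at 500 million euros of revenue in all three tiers. Below that level the fixed amount sets the ceiling; above it, the percentage does.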

These penalty amounts mirror GDPR’s structure. According to CMS GDPR Enforcement Tracker data through March 2026, GDPR enforcement has resulted in €4.38 billion in penalties across 2,092 enforcement actions, with Amazon’s €746 million fine (2021) and Meta’s €1.2 billion fine (2023) representing the largest actions. This shows that EU regulators are willing to impose massive fines for serious violations.

The AI Act gives authorities similar penalty powers, suggesting they will use them when they identify serious violations.

Non-Financial Consequences: Bans and Reputational Risk

Beyond financial penalties, non-compliance can trigger other serious consequences that might be even more damaging than fines.

Authorities can order the withdrawal of non-compliant AI systems from the market. If your product is fundamentally non-compliant, you might be forced to stop selling it entirely throughout the EU until compliance is achieved.

For serious violations, authorities could ban you from placing AI systems on the EU market for specified periods. This is a business death sentence for AI companies that depend on European market access.

Reputational damage from being publicly identified as an AI Act violator could be severe. In an environment where consumers and businesses increasingly care about responsible AI, being known for non-compliance damages brand value and customer trust.

Contractual consequences matter too. Many business contracts now include AI compliance representations and warranties. If you breach these contractual terms through AI Act violations, your customers could terminate contracts, demand damages, or refuse future business.

Litigation risk increases with non-compliance. Individuals harmed by non-compliant AI systems might sue for damages under national liability laws, where a breach of the AI Act could support their claim. Class action mechanisms in some member states could aggregate individual claims into massive liability exposure.

Insurance complications are emerging as well. Some cyber liability and professional indemnity insurance policies now exclude coverage for AI Act violations or charge significant premiums for coverage. Non-compliance could leave you uninsured against AI-related risks.

Enforcement Reality as of 2026: Who Has Been Fined?

As of April 2026, no major AI Act fines have been imposed yet. Enforcement is still in its early guidance-focused phase rather than active penalty imposition.

This does not mean enforcement will not happen. We are in the initial period where authorities focus on helping businesses understand requirements, providing guidance, and building enforcement capacity.

GDPR followed a similar pattern. The regulation took effect in May 2018, but most major enforcement actions came in 2019 and later as authorities built cases and completed investigations. I expect AI Act enforcement to follow this same trajectory.

The first enforcement actions will likely target clear violations of prohibited AI practices rather than complex disputes about high-risk system compliance adequacy. Proving someone operates a banned social scoring system is straightforward. Demonstrating that a company’s data governance framework for a high-risk AI system is insufficient involves technical judgments that take time to establish.

Some early enforcement has focused on ordering removal of specific AI applications from the market based on prohibited practice violations. These administrative actions do not generate the headlines that massive fines would but represent the beginning of active enforcement.

Do not mistake the current absence of major penalties for a lack of enforcement risk. Authorities are building capacity, developing enforcement strategies, and identifying targets. When enforcement ramps up in late 2027 and 2028, companies that have not prepared will face serious exposure.

The smart approach is treating compliance as essential despite limited current enforcement rather than gambling that enforcement will never materialize.

How the EU AI Act Compares to US and Global AI Regulation

The EU AI Act does not exist in isolation but as part of a global AI governance landscape with different regulatory approaches across major jurisdictions.

EU vs. US Approaches and the Brussels Effect

The fundamental difference between EU and US approaches is comprehensiveness versus fragmentation.

The EU adopted one AI Act covering all sectors through a unified risk-based framework. Whether you are developing healthcare AI, financial services AI, or employment AI, you face the same basic regulatory structure with risk-tier classifications determining your obligations.

The United States has taken a sector-specific approach without comprehensive federal AI legislation. Healthcare AI falls under FDA medical device frameworks. Financial services AI falls under banking regulators. Employment AI faces EEOC scrutiny. Consumer AI is monitored by the FTC.

This fragmented US approach means AI developers must navigate multiple regulatory frameworks. Some US states have passed their own AI regulations, creating further fragmentation. Colorado enacted AI transparency requirements. California is considering various AI bills.

The Biden administration issued an executive order on AI in October 2023 that directed federal agencies to develop AI governance within their domains, but this reinforced the sector-specific approach rather than creating comprehensive legislation. That order was rescinded in January 2025, leaving the federal picture even less unified.

Despite different approaches, both jurisdictions grapple with similar core concerns about AI safety, transparency, and fundamental rights protection.

However, EU regulation often becomes the de facto global standard, even for non-EU companies. GDPR demonstrated this Brussels Effect powerfully. When GDPR took effect in 2018, many companies applied GDPR-level data protection globally rather than maintaining different systems.

The AI Act will likely follow this pattern. As OpenAI’s compliance commitment demonstrates, EU standards shape global approaches. Europe’s market size (450 million consumers) makes compliance necessary. The engineering complexity and reputational risk of maintaining different versions for different markets often favor building to the highest standard everywhere.

This regulatory influence extends beyond direct compliance. Even companies that do not currently operate in Europe might adopt EU-aligned standards as best practices or in anticipation of eventual European market entry.

The Brussels Effect means that EU AI Act standards are likely to influence global AI development regardless of what other jurisdictions do. Europe may not lead in AI innovation metrics, but it is shaping the regulatory environment that governs global AI development.

EU AI Act Impact on European Innovation: Industry vs Regulator Perspectives

This question sits at the heart of the fiercest debates about EU AI policy. I have followed the arguments on both sides closely because they reveal fundamentally different perspectives on regulation’s role in technology development.

The Industry Warning: Over-Regulation Drives Talent to US and Asia

Major European companies including Siemens and Airbus raised concerns in an open letter that the AI Act might jeopardize European competitiveness. Their argument centers on regulatory burden creating disadvantages against competitors in less regulated markets.

The competitiveness concern has several dimensions. Compliance costs money and diverts engineering resources from innovation to regulatory requirements. Smaller startups with limited resources might struggle more than established companies, potentially tilting markets toward incumbents.

Talented AI researchers and developers might choose to work in environments with fewer regulatory constraints. If the best opportunities for cutting-edge AI development exist in Silicon Valley or Shenzhen rather than Europe, that is where talent will flow.

Investment capital might favor less regulated markets as well. Venture capital seeks maximum return potential, and regulatory compliance costs reduce returns. Some evidence suggests AI investment is indeed flowing more toward the US and China than Europe.

Business lobbies argue the Act represents over-regulation that drives startups to establish themselves in the US or Asia rather than Europe. This brain drain and capital flight could leave Europe falling further behind in AI capabilities.

The innovation ecosystem concern is real. Europe already lags the US and China in major AI company formation, AI talent concentration, and AI investment. Whether the AI Act accelerates or reverses this trend will shape Europe’s technological future.

The Regulator’s Case: Seatbelts Did Not Stop Car Innovation

Former EU Commissioner Thierry Breton offered a compelling counter-argument using the automobile analogy. When cars were invented, governments eventually imposed regulations including speed limits, seatbelt requirements, safety standards, and emission controls.

Did these regulations stop automotive innovation? Obviously not. The global automobile industry continued thriving, developing safer and more advanced vehicles. Regulation did not kill innovation but channeled it in socially beneficial directions.

Breton argues that AI regulation works the same way. Clear rules about what is acceptable build public trust that enables broader adoption. Without rules, public backlash against harmful AI uses could create much worse constraints on the entire sector.

The trust-enabling function of regulation is important. If AI systems regularly discriminate, violate privacy, or cause other harms without accountability, public and political pressure could lead to much more restrictive interventions. Reasonable regulation up front heads off that kind of backlash-driven overcorrection.

Standards can actually accelerate innovation by creating clear expectations. When companies know what compliance requires, they can design accordingly from the start rather than navigating complete uncertainty.

The competitive concern might also be overstated. European companies that build AI systems meeting the highest global standards could have advantages in international markets where trust and safety matter. Being known for responsible AI could be a competitive edge rather than a disadvantage.

From this perspective, the AI Act is not anti-innovation. It favors responsible innovation that can grow sustainably because it maintains public trust and political support.

Early Evidence: Who Is Winning the AI Race?

As of 2026, it is still too early to say definitively whether the AI Act helps or hurts European innovation. The major provisions have not even been enforced yet, so we cannot measure their actual impact.

Some early indicators suggest continued challenges for European AI competitiveness. The largest AI model providers remain American companies like OpenAI, Anthropic, and Google or Chinese companies like Baidu and Alibaba. European companies have not produced equivalents to ChatGPT or Claude.